Introduction:

In this experiment, we test whether a model can understand and classify image styles, i.e., identify the look and feel of an image. This differs slightly from generic image classification projects, where we look for the presence (or absence) of an object in the image.

Image style features are complex and difficult to explain or model by hand, so we suggest a neural network architecture that can learn these complex features automatically.

Literature Review:

There are only a few papers on image style classification. Many more focus on image style transfer, which is a more complex problem than style classification. A recent paper [1] on an image recommender system suggests using deep features and compact convolutional transformers to recognize image style, and then builds a recommendation system on the learnt features. Another [2] (2021) focuses on style classification of clothing commodity images in the e-commerce domain, where the idea is to classify images into two labels, business and sports. It suggests using random walk to separate the foreground, HOG for feature extraction, and an SVM for classification. Another paper [3] (2022) focuses on architectural style classification. It uses deformable part-based models (DPM) to capture the morphological characteristics of basic architectural components and proposes multinomial latent logistic regression (MLLR) to classify into multiple classes. Paper [4] (2017) focuses on artistic style classification and recommends using ResNet with bagging. Another paper on artistic style classification [5] uses VGG-19 for feature extraction, followed by deep correlation features and an SVM for classification. There are also a couple of blogs and articles on image style classification. The closest to our experiment is [6], which tries to identify whether an image has a ‘BMW’ style, i.e., it focuses not on the object but on a BMW look and feel. They achieved 95% accuracy using a ResNet model.

Data:

We have around 4000 images from two categories, newer style and older style.

Unfortunately, due to privacy issues, we cannot upload the actual data samples. Instead, we are uploading similar images found on Google.

Some examples of the data:

a)

Visually, we can determine that the newer style images are more eye-catching with minimal but bold features.

The raw data contains images in various formats (jpg, png, webp, etc.) as well as some duplicates, both within each label and across labels. These issues have been cleaned up.

The cleaned data has 1830 images under the old style label and 1903 images under the new style label.
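The cleaning script itself is not shown here. As a rough sketch of one way to find exact duplicates, files could be grouped by a hash of their bytes. The `find_duplicate_groups` helper and the one-subfolder-per-label layout are assumptions made for illustration. Byte-level hashing only catches identical files, not the same picture saved in a different format.

```python
import hashlib
from collections import defaultdict
from pathlib import Path


def find_duplicate_groups(root):
    """Group files under `root` that have identical byte content.

    Hypothetical helper: assumes one subfolder per label, e.g.
    root/old_style/... and root/new_style/...
    """
    by_digest = defaultdict(list)
    for path in sorted(Path(root).rglob("*")):
        if path.is_file():
            digest = hashlib.sha256(path.read_bytes()).hexdigest()
            by_digest[digest].append(path)
    # Keep only the digests shared by more than one file
    return [paths for paths in by_digest.values() if len(paths) > 1]
```

A group whose paths sit under different label folders would be a cross-label duplicate, and its correct label would need to be decided by hand.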

Approach:

The objective is to classify an image based on its style. We have two categories, newer style and older style.

Based on the literature review and the data available, we propose the following approach. We use transfer learning on established CNN architectures pre-trained on the ImageNet dataset. We will go forward with the following experiments:

Train a VGG-19 model (transfer learning).

Train a ResNet-50 model (transfer learning).

As these models are relatively lightweight, they are more feasible to deploy and maintain than other recent classification models.

Additionally, once the model is trained, we want to make sure the model has learnt the proper features rather than some shortcuts. We can do this with the help of Grad-CAM. It helps us visually validate where our network is looking and what patterns are triggering the activations. If the validation fails, we know that the model hasn’t learnt the patterns we need and it is not ready for deployment.

Project Setup:

Software:

Ubuntu 20.04

Python 3.8

TensorFlow 2.10

Hardware:

GCP VM instance

1 Tesla T4 GPU

8 Intel(R) Xeon(R) CPU @ 2.30GHz

Directory Structure:

Experiments:

Data:

The cleaned dataset contains 3733 images. Split the dataset three ways (train, val, test), with 70%, 20% and 10% of the data respectively.
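A minimal sketch of such a 70/20/10 split is shown below. The `split_dataset` name, the fixed seed and the plain shuffle (no stratification by label) are assumptions, and the actual split may have been done differently.

```python
import random


def split_dataset(items, fractions=(0.7, 0.2, 0.1), seed=42):
    """Shuffle `items` and cut them into train, val and test lists."""
    items = list(items)
    random.Random(seed).shuffle(items)   # reproducible shuffle
    n_train = int(fractions[0] * len(items))
    n_val = int(fractions[1] * len(items))
    train = items[:n_train]
    val = items[n_train:n_train + n_val]
    test = items[n_train + n_val:]       # the remainder goes to test
    return train, val, test
```

With 3733 items, this rounding gives 2613 training, 746 validation and 374 test images.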

Augment the training data using tf.keras’s ImageDataGenerator class. The techniques used are rotation, zooming, width and height shift, and shearing.
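The augmentation could be configured roughly as follows. The parameter names are those of `ImageDataGenerator`, but every numeric range here is an illustrative assumption rather than the value used in the experiment.

```python
import tensorflow as tf

# All ranges below are illustrative assumptions, not the experiment's settings.
train_datagen = tf.keras.preprocessing.image.ImageDataGenerator(
    rotation_range=20,        # random rotation, in degrees
    zoom_range=0.15,          # random zoom in or out by up to 15%
    width_shift_range=0.1,    # horizontal shift, as a fraction of the width
    height_shift_range=0.1,   # vertical shift, as a fraction of the height
    shear_range=0.15,         # shear angle, in degrees
)

# Validation and test images are left unaugmented
eval_datagen = tf.keras.preprocessing.image.ImageDataGenerator()
```

Batches would then typically be read with `train_datagen.flow_from_directory(...)`, using `target_size=(224, 224)` and `class_mode="categorical"` to match the model input and loss described in this post.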

Models:

ResNet50:

Build ResNet50 model trained on ImageNet

Chop off the FC layers and freeze weights on the conv layers

Add a dense layer and a softmax layer

VGG19:

Build VGG19 model trained on ImageNet

Chop off the FC layers and freeze weights on the conv layers

Add two dense layers and a softmax layer
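The two model recipes above can be sketched with `tf.keras.applications`. The helper name `build_classifier`, the dense layer sizes and the global average pooling between the frozen backbone and the dense layers are assumptions, since the post does not state them.

```python
import tensorflow as tf


def build_classifier(backbone="resnet50", weights="imagenet", num_classes=2):
    """Frozen ImageNet backbone with a small trainable classification head."""
    input_shape = (224, 224, 3)
    if backbone == "resnet50":
        base = tf.keras.applications.ResNet50(
            weights=weights, include_top=False, input_shape=input_shape)
        hidden_units = [256]         # one dense layer (size is an assumption)
    elif backbone == "vgg19":
        base = tf.keras.applications.VGG19(
            weights=weights, include_top=False, input_shape=input_shape)
        hidden_units = [512, 256]    # two dense layers (sizes are assumptions)
    else:
        raise ValueError(f"unknown backbone: {backbone}")

    base.trainable = False           # freeze the convolutional weights

    # Pooling choice is an assumption; Flatten would also work
    x = tf.keras.layers.GlobalAveragePooling2D()(base.output)
    for units in hidden_units:
        x = tf.keras.layers.Dense(units, activation="relu")(x)
    outputs = tf.keras.layers.Dense(num_classes, activation="softmax")(x)
    return tf.keras.Model(inputs=base.input, outputs=outputs)
```

`include_top=False` is what drops the original fully connected layers. Calling `build_classifier("vgg19")` would give the VGG19 variant.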

Build one of the above models

Compile the model with the Adam optimizer and categorical cross-entropy loss

While training, monitor the validation loss

Save the model if val_loss is lower than in the previous epoch

Reduce learning rate if no improvement in val_loss after a set number of epochs

Stop early if no improvement in val_loss after a set number of epochs
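The training steps above map onto standard Keras callbacks, sketched below. The helper names, checkpoint file name, learning rate, reduction factor and patience values are assumptions. Note that `save_best_only=True` saves when val_loss beats the best value seen so far, which is slightly stricter than comparing against the previous epoch only.

```python
import tensorflow as tf


def compile_for_training(model, learning_rate=1e-3):
    """Compile with Adam and categorical cross-entropy (learning rate assumed)."""
    model.compile(
        optimizer=tf.keras.optimizers.Adam(learning_rate=learning_rate),
        loss="categorical_crossentropy",
        metrics=["accuracy"],
    )
    return model


def make_callbacks(checkpoint_path="best_model.h5"):
    """Callbacks that all watch val_loss; patience values are assumptions."""
    return [
        # Save the model whenever val_loss improves on the best value so far
        tf.keras.callbacks.ModelCheckpoint(
            checkpoint_path, monitor="val_loss", save_best_only=True),
        # Cut the learning rate after 3 epochs without improvement
        tf.keras.callbacks.ReduceLROnPlateau(
            monitor="val_loss", factor=0.1, patience=3),
        # Stop training after 8 epochs without improvement
        tf.keras.callbacks.EarlyStopping(monitor="val_loss", patience=8),
    ]
```

The list would be passed to `model.fit(..., validation_data=..., callbacks=make_callbacks())`. Keeping the early stopping patience larger than the learning rate patience gives the reduced learning rate a chance to help before training stops.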

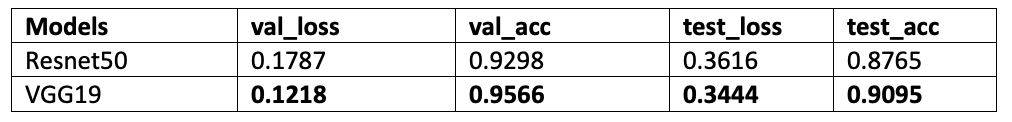

From the results, we see that VGG19 performs slightly better than ResNet50. Let us also check that the model has learnt the appropriate patterns.

We will run one of the images shown above through Grad-CAM and visualize the output:

Grad-CAM generated heatmap superimposed on the original image

We see that the activated regions lie slightly above the bold text as well as on the text near the bottom right. As the original image is of size (3000, 3000) and the input to the model is (224, 224), we can reasonably say that the activations are visually displaced due to the resizing. The activated regions are also the features that a human would look at to identify the style difference, so we can conclude that the model has learnt the correct patterns.
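For reference, the core Grad-CAM computation can be sketched in NumPy. The function name is hypothetical. In practice the two inputs would be the last convolutional layer's activations and the gradients of the predicted class score with respect to them, obtained for example with `tf.GradientTape`.

```python
import numpy as np


def grad_cam_heatmap(activations, gradients):
    """Compute a Grad-CAM heatmap from conv activations and their gradients.

    Both inputs have shape (H, W, K): K feature maps on an H x W grid.
    """
    # Channel importance: gradients averaged over the spatial grid
    weights = gradients.mean(axis=(0, 1))                          # shape (K,)
    # Weighted sum of the feature maps
    cam = np.tensordot(activations, weights, axes=([2], [0]))     # shape (H, W)
    # ReLU keeps only regions that push the class score up
    cam = np.maximum(cam, 0.0)
    peak = cam.max()
    # Scale to [0, 1] unless the map is all zeros
    return cam / peak if peak > 0 else cam
```

The resulting map is much coarser than the image and has to be upsampled before it is overlaid, which may be one source of the small offsets noted above.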

Citations:

[1] Somaye Ahmadkhani and Mohsen Ebrahimi Moghaddam, "Image recommender system based on compact convolutional transformer image style recognition," Journal of Electronic Imaging 31(4), 043054 (27 August 2022). https://doi.org/10.1117/1.JEI.31.4.043054

[2] Zhang, Y., Song, R. (2022). Research on the Style Classification Method of Clothing Commodity Images. In: Hassanien, A.E., Xu, Y., Zhao, Z., Mohammed, S., Fan, Z. (eds) Business Intelligence and Information Technology. BIIT 2021. Lecture Notes on Data Engineering and Communications Technologies, vol 107. Springer, Cham. https://doi.org/10.1007/978-3-030-92632-8_38

[3] Wang, B., Zhang, S., Zhang, J. et al. Architectural style classification based on CNN and channel–spatial attention. SIViP (2022). https://doi.org/10.1007/s11760-022-02208-0

[4] Lecoutre, A., Negrevergne, B., Yger, F. (2017). Recognizing Art Style Automatically in Painting with Deep Learning. Proceedings of the Ninth Asian Conference on Machine Learning.

[5] Chu, W.-T., Wu, Y.-L. (2018). Image Style Classification based on Learnt Deep Correlation Features.

[6] https://blog.issart.com/classification-of-image-style-using-deep-learning-with-python/